Peak State Festival App

A progressive web app, custom CMS, live-sync Worker, and offline-first map. Built solo in 28 days for a 4-day desert festival and feature film shoot.

A festival app for a festival that's also a movie set.

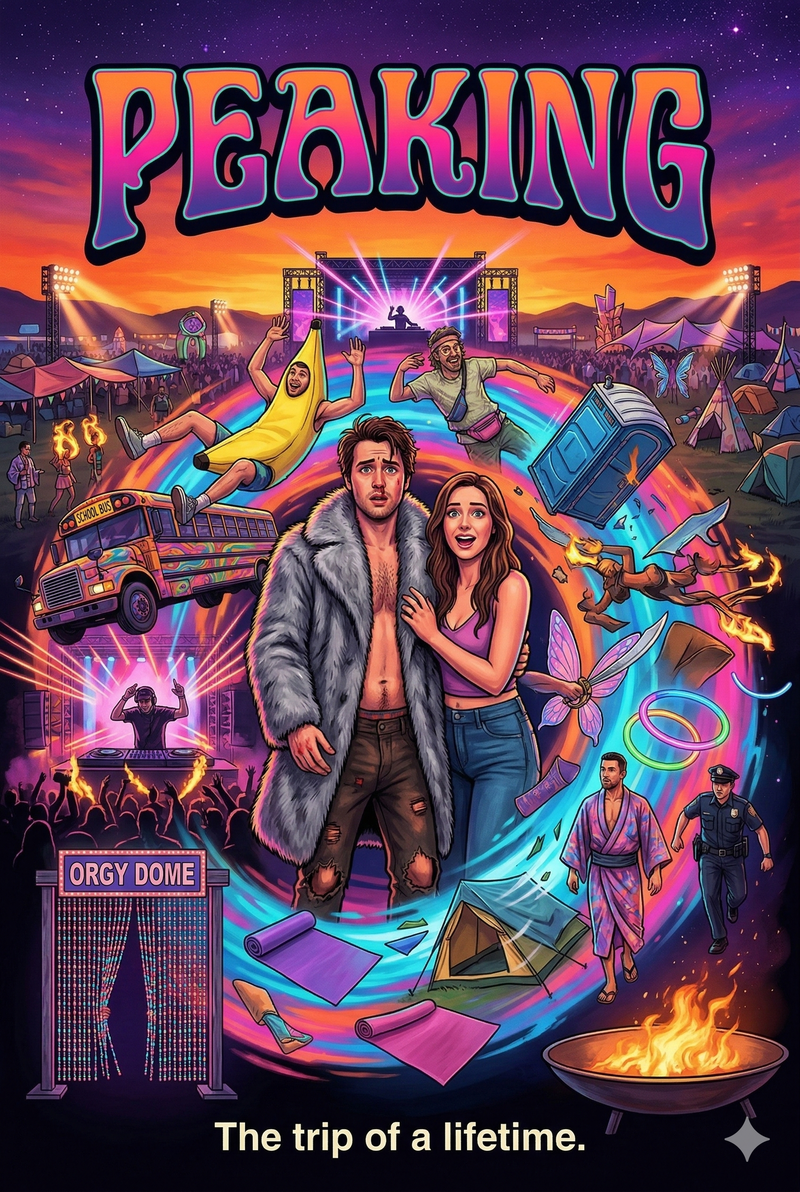

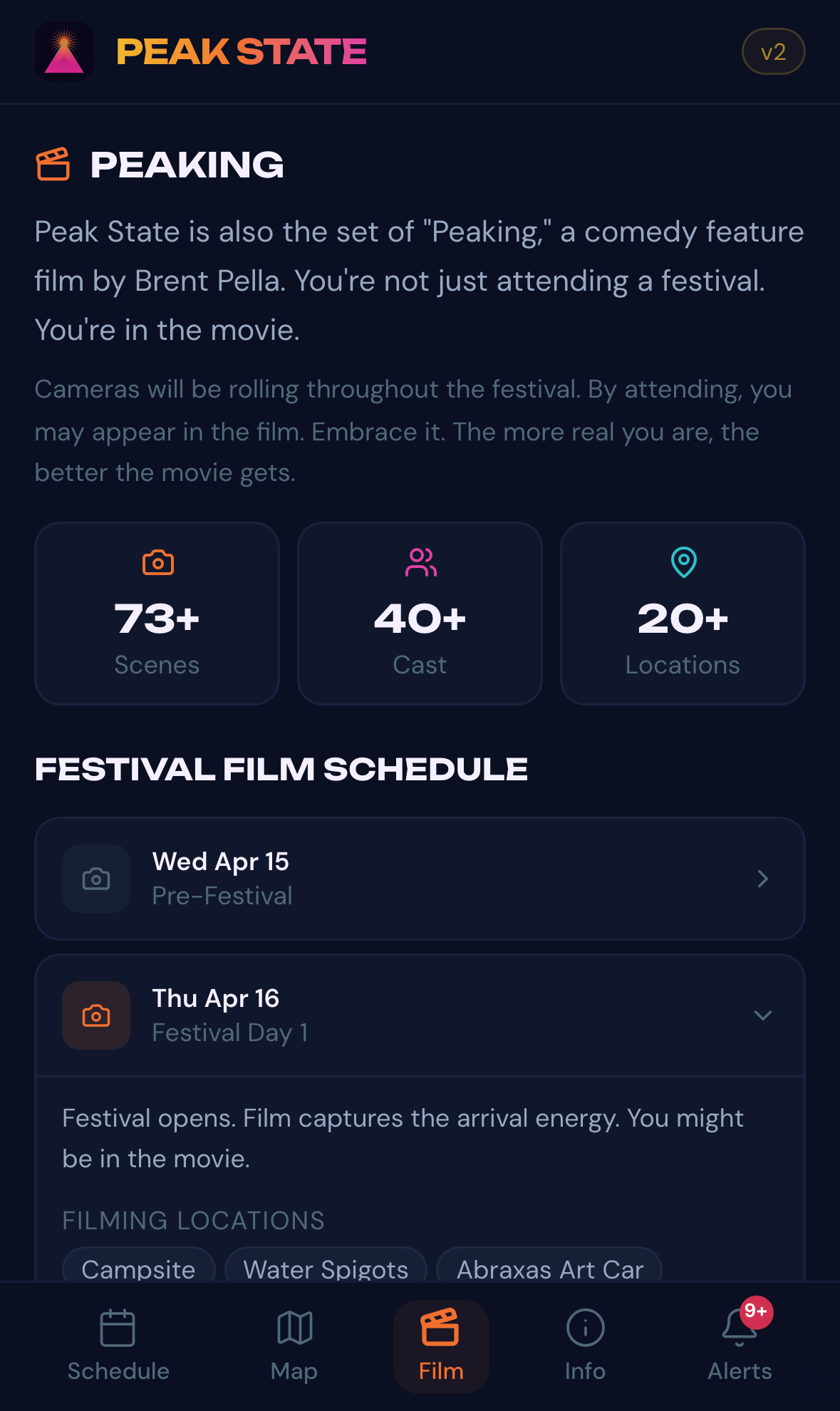

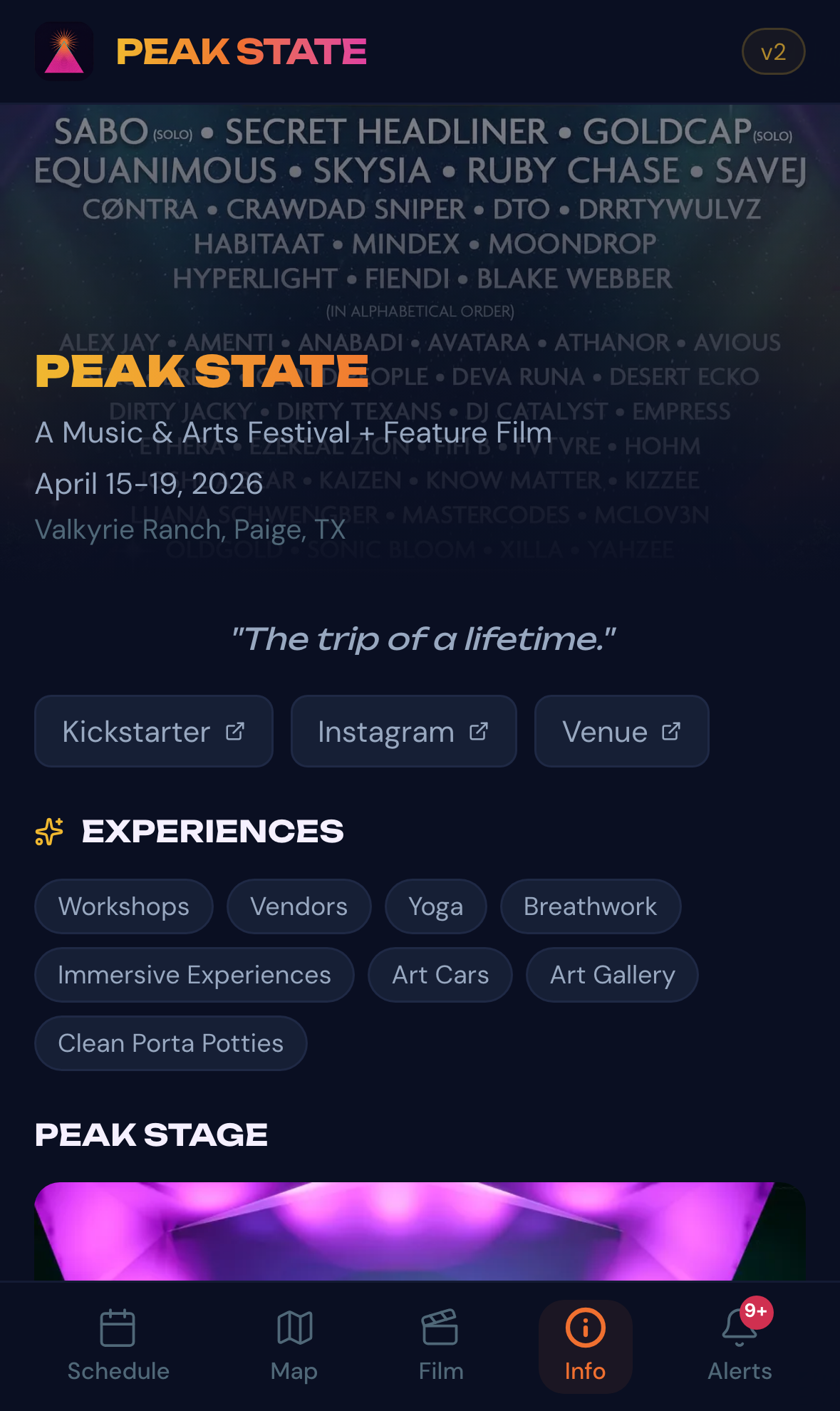

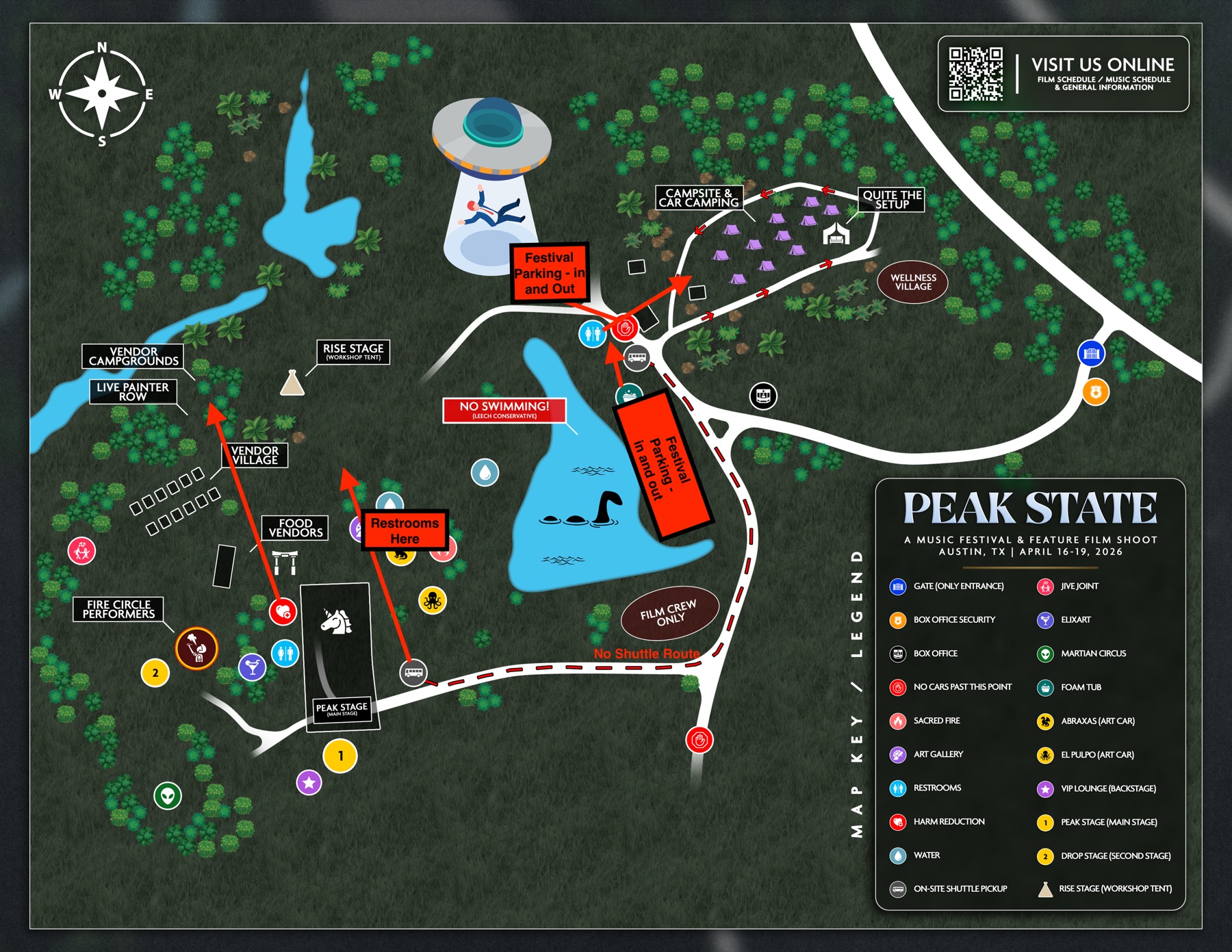

Peak State was a music and arts festival held April 16-19, 2026, at Valkyrie Ranch outside Austin. It also served as the live set of Peaking, a feature film by director Brent Pella and producer Nikki Howard. Every attendee was potentially on camera. Six stages, 27 announced artists, two giant art cars (Abraxas the 60-ft fire dragon, El Pulpo Mecanico the 28-ft mechanical octopus), and a 73-scene shooting schedule.

Jesse Brede, the festival's Technical Production Manager and CEO of Gravitas Recordings, asked on March 19: "What do you think it would take to make a SUPER simple iPhone/Android app for Peak State festival?"

28 days later, the app went live at app.didyoupeakyet.com: a custom CMS, a Cloudflare Worker syncing five data categories from Google Sheets, an offline-first map of all 24 venue pins, and a film tab that explained to attendees how to be in the movie.

I built and shipped all of it solo, running a custom agent swarm of Claude, Gemini, ChatGPT, and Grok. Peak State was the first production-pressure test of the early setup that has since become Murmuration.

The instinct says native. The math says PWA.

When somebody says "iPhone and Android," the first thought is two native apps: Swift for iOS, Kotlin for Android. Two codebases. Two App Store submissions. 3-7 day reviews per change. For a one-time festival on a tight timeline, that's the wrong tool.

The commercial festival app vendors (Aloompa powers Bonnaroo and CMA Fest; Greencopper powers SXSW and Lollapalooza) solve this problem, but they charge enterprise rates and lock you into their platform. A Progressive Web App skips both problems: scan a QR code, tap "Add to Home Screen," and it shows up as a full-screen app on your phone. Caches everything locally, works offline, updates in seconds.

| Approach | Build time | Codebases | Offline | App Store | Update speed |
|---|---|---|---|---|---|
| Native (iOS + Android) | 4-8 weeks | 2 | Yes, complex | Required | 3-7 days |
| Hybrid (Capacitor wrap) | 2-3 weeks | 1 | Yes | Required | 3-7 days |
| Commercial vendor | Weeks + onboarding | Their platform | Varies | Their call | Their timeline |
| PWA | 2 weeks (core shell dev only) | 1 | Built in | None | Instant |
| Mobile website | 1 week | 1 | No | None | Instant |

The offline thing is why this matters most

Cell service at festivals is notoriously unreliable. Valkyrie Ranch is 500 acres, about an hour east of Austin, with thousands of phones hammering the same tower. Any festival app that requires a constant internet connection is useless during the event itself.

A PWA's service worker caches the entire schedule, map, and emergency info on first load. The app runs in airplane mode after that. When connectivity comes back, it syncs in the background. This was the single biggest technical decision: a plain mobile website cannot work offline at all, and native or hybrid apps get there only with extra complexity and an App Store review in the way.
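The fallback behavior can be illustrated without any service worker API. This is a minimal sketch of a network-first read with a cache fallback, not the app's actual code: `KeyValueStore`, `loadOfflineFirst`, and the `/api/schedule` key are hypothetical names, and in the real app the caching is handled by the service worker that vite-plugin-pwa generates.

```typescript
// Hypothetical minimal store interface; the real app persists to IndexedDB.
interface KeyValueStore {
  get(key: string): string | undefined;
  set(key: string, value: string): void;
}

type Fetcher = (key: string) => Promise<string>;

interface LoadResult {
  body: string | undefined;
  source: "network" | "cache" | "none";
}

// Try the network first. On success, refresh the local copy.
// On failure, fall back to whatever an earlier load cached.
async function loadOfflineFirst(
  key: string,
  fetcher: Fetcher,
  store: KeyValueStore,
): Promise<LoadResult> {
  try {
    const body = await fetcher(key);
    store.set(key, body);
    return { body, source: "network" };
  } catch {
    const cached = store.get(key);
    return { body: cached, source: cached === undefined ? "none" : "cache" };
  }
}
```

The point of the shape is that the caller never has to know whether the phone has signal: it gets the freshest copy available and a label saying where it came from.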

From "SUPER simple" to a full PWA in 4 days.

Day 1, I quoted $4,500 to build it. The festival didn't have budget available for that. I decided to build it anyway. We shook hands over SMS. No contract, no work-for-hire. This was an opportunity to pressure-test an early version of my agent swarm. Building under pressure is fun for me.

Day 4 was a working v1 prototype with a Peaking movie poster I generated for the splash screen. Day 5, after the production team sent over the official "Peaking" PSD, I rebuilt v2 against the festival's actual brand: poster-derived palette, extracted logo, 27 artists, tiered lineup, secret headliner card, pre-fest Day 0, the official Peaking poster as the splash, and an Experiences section.

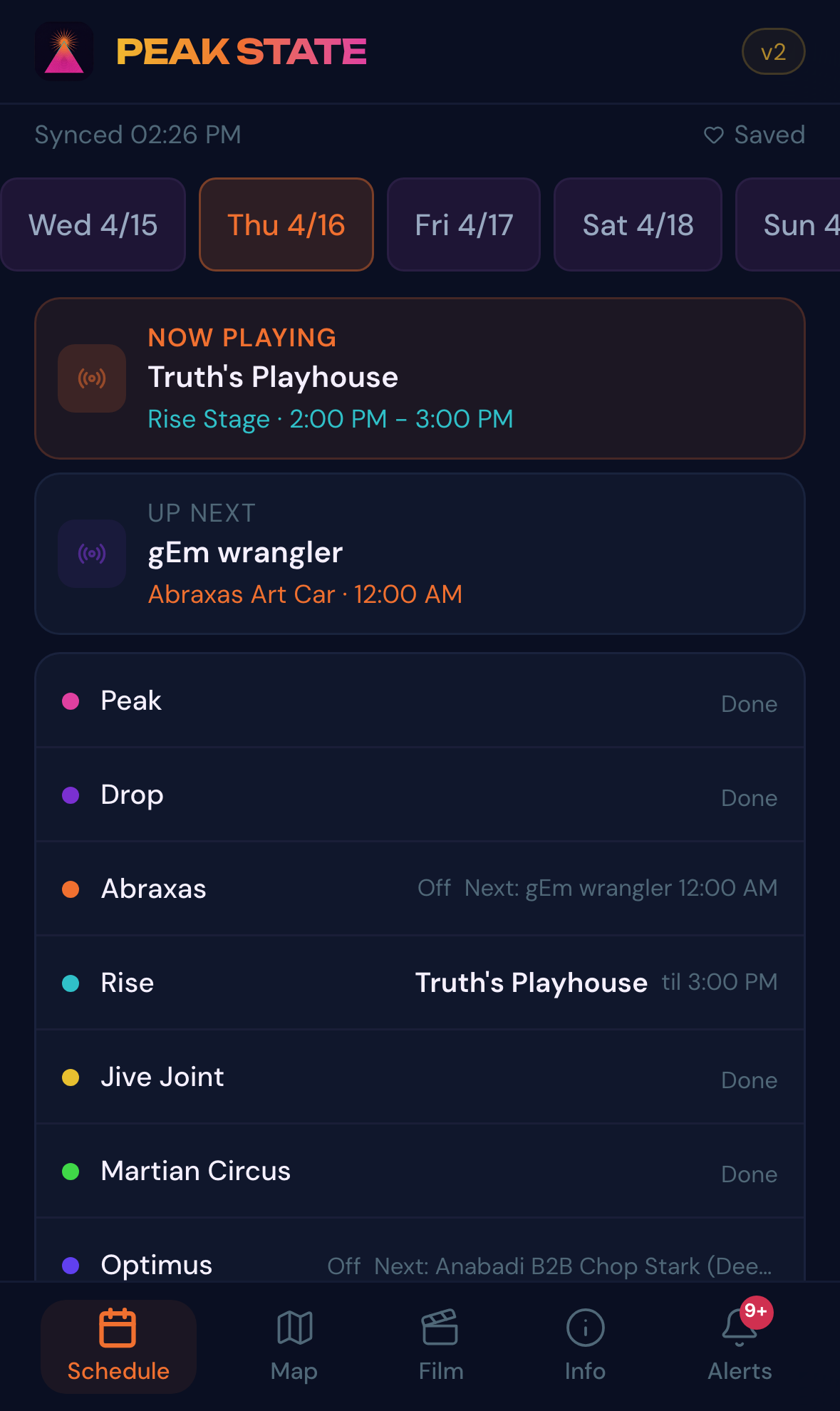

A splash screen and 5 tabs. Built for a phone in your hand at 2am in a field.

Designed mobile-first, offline-first, festival-first. Every tab survives losing signal. The schedule keeps working. The map keeps working. The favorites you set on Wednesday still buzz your wrist 15 minutes before the set on Saturday.
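The 15-minute reminder comes down to a small time-window check that can run entirely on the device. The sketch below is an illustration under assumptions, not the shipped code: `FavoriteSet`, `dueReminders`, and `buzz` are hypothetical names, and the vibration pattern is arbitrary. The Vibration API is not available in every browser (iOS Safari does not support it), so `buzz` degrades to a no-op.

```typescript
interface FavoriteSet {
  id: string;
  artist: string;
  startsAt: number; // epoch milliseconds
}

const REMINDER_LEAD_MS = 15 * 60 * 1000; // 15 minutes before the set

// Favorites whose reminder time has arrived but whose set has not started,
// skipping any that were already notified.
function dueReminders(
  favorites: FavoriteSet[],
  now: number,
  alreadyNotified: Set<string>,
): FavoriteSet[] {
  return favorites.filter(
    (f) =>
      !alreadyNotified.has(f.id) &&
      now >= f.startsAt - REMINDER_LEAD_MS &&
      now < f.startsAt,
  );
}

// Browser-only side effect: vibrate when the Vibration API exists.
function buzz(): boolean {
  const nav = (globalThis as unknown as {
    navigator?: { vibrate?: (pattern: number[]) => boolean };
  }).navigator;
  return nav !== undefined && typeof nav.vibrate === "function"
    ? nav.vibrate([200, 100, 200])
    : false;
}
```

Because the check only needs the locally stored favorites and the device clock, it keeps working with no signal.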

Splash screen

Schedule

Map

Film

Info

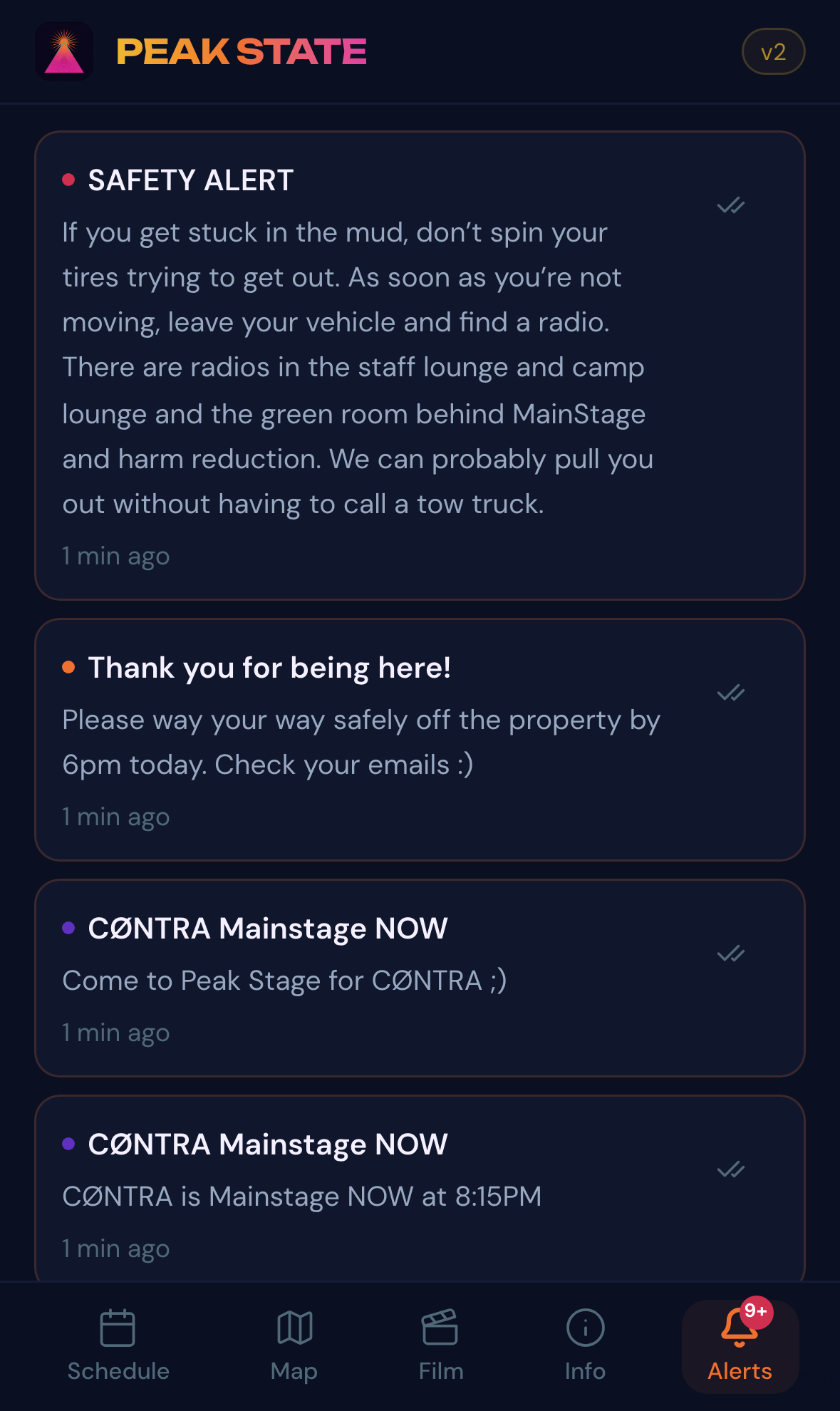

Alerts

Sheet → Worker → KV → app. Edit a cell, see it in 60 seconds.

The whole thing runs on a Google Sheet. Six tabs, six endpoints, one Cloudflare Worker that polls them on a cron and caches the JSON in KV. The app reads from the Worker, not the Sheet. The Sheet is the CMS. Non-technical operators (Jesse, Eric, Areen) edited live during the festival without a deploy.
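The Sheet → Worker → KV → app flow can be sketched in a few functions. This is a rough outline under stated assumptions rather than the deployed Worker: `KV` is a minimal stand-in for the Workers KV binding, `sheetCsvUrl`, `kvKey`, `syncAll`, and `readTab` are hypothetical names, the `tab:` key scheme is invented, and the CSV-by-tab-name URL uses Google's gviz export endpoint, which may not be how the real Worker reads the Sheet.

```typescript
// Minimal stand-in for the Workers KV binding.
interface KV {
  get(key: string): Promise<string | null>;
  put(key: string, value: string): Promise<void>;
}

// Tab names of the CMS sheet.
const TABS = ["Alerts", "Artists", "Set Times", "Film", "Scenes", "Info"] as const;

// Assumption: CSV export addressed by tab name through the gviz endpoint.
function sheetCsvUrl(sheetId: string, tabName: string): string {
  return `https://docs.google.com/spreadsheets/d/${sheetId}/gviz/tq?tqx=out:csv&sheet=${encodeURIComponent(tabName)}`;
}

// Hypothetical key scheme: "Set Times" -> "tab:set-times".
function kvKey(tabName: string): string {
  return "tab:" + tabName.toLowerCase().replace(/\s+/g, "-");
}

type RowFetcher = (tabName: string) => Promise<string[][]>;

// Cron side: pull every tab and cache it as JSON.
// A tab that fails to load keeps its previous cached value.
async function syncAll(fetchRows: RowFetcher, kv: KV): Promise<string[]> {
  const failed: string[] = [];
  for (const tab of TABS) {
    try {
      const rows = await fetchRows(tab);
      await kv.put(kvKey(tab), JSON.stringify(rows));
    } catch {
      failed.push(tab);
    }
  }
  return failed;
}

// Request side: the app reads cached JSON, never the Sheet itself.
async function readTab(tabName: string, kv: KV): Promise<string> {
  return (await kv.get(kvKey(tabName))) ?? "[]";
}
```

Keeping the last good value on a failed poll matters here: a bad edit or a Sheets outage during the festival should leave the app showing slightly stale data, not an empty schedule.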

- React 19.2 + Vite 7.3 + TypeScript 5.9

- Tailwind 4.1 for styling, Zustand 5 for state, Dexie 4 (IndexedDB) for offline persistence

- Leaflet for the map, vite-plugin-pwa for the service worker + manifest

- Unbounded (display) + DM Sans (body), pulled from the official Peaking PSD

- Cloudflare Worker with KV cache, polling Google Sheets API on a 1-minute cron during festival (5-min pre-fest)

- Six tabs in the CMS sheet (Alerts, Artists, Set Times, Film, Scenes, Info) feeding the five app tabs

- Custom wide-grid parser for the 8-column workshop schedule (2 header rows + 4 day-pairs)

- fetchSheetCSVByName resolver: fetch by tab name, not gid

- Cloudflare Pages at app.didyoupeakyet.com via CNAME from the festival's Wix DNS

- Netlify mirror at travisfixes.com/peak-state-aa57b5cf/ as a fallback

- PWA installable on iOS and Android, custom icons extracted from the official logo, splash screen rendered from the Peaking poster

- Dropdown menus on every input: stage, day, type, alert type, active, featured, secret

- Color-coded tabs, frozen headers, header row protection

- README tab in plain English so a non-technical operator can edit on their first day

- Cell notes on every column header explaining intent
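The wide-grid parser mentioned in the list above is the least obvious piece, so here is one way such a parser could look. The layout is an assumption on my part for illustration: two header rows (day labels, then column labels) and each day occupying two adjacent columns (time, title), which gives 8 columns for 4 days. `WorkshopSlot` and `parseWideGrid` are hypothetical names and the sample rows are made up.

```typescript
interface WorkshopSlot {
  day: string;
  time: string;
  title: string;
}

const HEADER_ROWS = 2; // row 0: day labels, row 1: column labels (assumed)
const PAIR_WIDTH = 2;  // each day spans a (time, title) column pair (assumed)

// Unpivot a wide grid into one flat record per scheduled workshop.
function parseWideGrid(rows: string[][]): WorkshopSlot[] {
  const slots: WorkshopSlot[] = [];
  const dayRow = rows[0] ?? [];
  const width = rows.reduce((max, row) => Math.max(max, row.length), 0);
  for (let col = 0; col < width; col += PAIR_WIDTH) {
    const day = (dayRow[col] ?? "").trim();
    if (!day) continue; // no day label above this pair
    for (const row of rows.slice(HEADER_ROWS)) {
      const time = (row[col] ?? "").trim();
      const title = (row[col + 1] ?? "").trim();
      if (time && title) slots.push({ day, time, title });
    }
  }
  return slots;
}

// Made-up sample with two day-pairs instead of four.
const sampleGrid = [
  ["Thu", "", "Fri", ""],
  ["Time", "Workshop", "Time", "Workshop"],
  ["10:00", "Yoga", "11:00", "Breathwork"],
  ["12:00", "", "13:00", "Sound Bath"],
];
const sampleSlots = parseWideGrid(sampleGrid);
```

Flattening to one record per slot lets the app treat workshops like any other schedule entry, while operators keep the side-by-side grid that is easier to edit in a spreadsheet.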

What this actually cost to build.

The build spanned a 28-day calendar window from ask to live. Ten of those days were active build days, totaling ~30 hours of focused work. The Claude figure is a hard number from my own attribution tracker (claude-fuel). Gemini, ChatGPT, and Grok ran lighter as research and sanity-check sub-agents; their API spend is estimated from observed volumes at published per-token rates.

// API spend breakdown: Claude $760 (hard) · Gemini ~$30 · ChatGPT ~$20 · Grok ~$15

Build timeline by lane

Mar 19 to Apr 19. Each lane is one agent (or me). Block opacity tracks intensity that day: brighter blocks are heavier work.

Reactions from the people running it.

Declined the contract. Kept building. Kept the IP.

Three weeks in, a Volunteer Deal Memorandum and Personal Release arrived from Synchronica LLC, the production company behind Peaking. Section 5.0 Work-for-Hire language would have transferred the app, the Worker, the CMS, and every piece of infrastructure I'd built, in perpetuity, all media, with no obligation to credit me.

My counsel flagged 15 amendments across roughly 7 sections. I offered redlines to their lawyer. The response: "She won't accept any redlines. Maybe just come as my guest." I said yes. Medical complications kept me from attending in the end.

The app stayed on my infrastructure. The IP stayed mine. The portfolio stays mine. The relationship stays intact.

Full timeline. Every milestone, every hotfix.

Reconstructed from the production thread, the Claude attribution tracker, and iMessage with the operations team. Build days color-coded by day-of-week. Times in CT.

- Stage-off banners (per-filter) + StageOverview "now at each stage"

- Event reminders with vibration 15 min before favorited sets

- Schedule conflict detection on overlapping favorites

- Walking time between stages via Haversine (~60m/min)

- Lock-screen lineup export (canvas render + Web Share API)

- Offline map fallback with tile-error detection

- Type column on Set Times, Stage Off rows auto-filtered to /api/stage-status
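Two of the features above are small enough to sketch directly: walking time from the Haversine great-circle distance at the quoted ~60 m/min pace, and conflict detection as interval overlap on favorited sets. This is an illustrative sketch, not the shipped code: the type and function names are hypothetical, and rounding up to whole minutes is my assumption.

```typescript
interface LatLng {
  lat: number;
  lng: number;
}

const EARTH_RADIUS_M = 6371000;
const WALK_SPEED_M_PER_MIN = 60; // pace quoted in the feature list

// Great-circle distance in meters between two coordinates.
function haversineMeters(a: LatLng, b: LatLng): number {
  const toRad = (deg: number): number => (deg * Math.PI) / 180;
  const dLat = toRad(b.lat - a.lat);
  const dLng = toRad(b.lng - a.lng);
  const h =
    Math.sin(dLat / 2) ** 2 +
    Math.cos(toRad(a.lat)) * Math.cos(toRad(b.lat)) * Math.sin(dLng / 2) ** 2;
  return 2 * EARTH_RADIUS_M * Math.asin(Math.min(1, Math.sqrt(h)));
}

// Whole minutes, rounded up (assumption), at the quoted walking pace.
function walkingMinutes(a: LatLng, b: LatLng): number {
  return Math.ceil(haversineMeters(a, b) / WALK_SPEED_M_PER_MIN);
}

interface TimedSet {
  id: string;
  start: number; // minutes or milliseconds, as long as units match
  end: number;
}

// Pairs of sets whose time ranges overlap. Back-to-back sets do not conflict.
function findConflicts(sets: TimedSet[]): Array<[string, string]> {
  const sorted = [...sets].sort((x, y) => x.start - y.start);
  const conflicts: Array<[string, string]> = [];
  for (let i = 0; i < sorted.length; i++) {
    for (let j = i + 1; j < sorted.length && sorted[j].start < sorted[i].end; j++) {
      conflicts.push([sorted[i].id, sorted[j].id]);
    }
  }
  return conflicts;
}
```

Haversine gives straight-line distance, so on a real site with fences and art cars in the way the estimate is a lower bound rather than a route.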

A few things I'm taking into the next app build (or similar project). None of these are the kind of bug you find in a stack trace; they're process gaps that only show up when the lights are flashing.

// SEED-FROM-STATIC: replace before launch. Pre-launch checklist greps for those tags. None ship to production.
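A check like that is easy to automate. The sketch below shows the idea as a pure function over file contents; it assumes the tag is the literal string `SEED-FROM-STATIC`, and `TagHit` and `findSeedTags` are hypothetical names. The actual checklist may simply be a grep on the command line.

```typescript
interface TagHit {
  file: string;
  line: number; // 1-based
  text: string;
}

const SEED_TAG = "SEED-FROM-STATIC";

// Scan file contents (path -> text) and report every line carrying the tag.
function findSeedTags(files: Record<string, string>): TagHit[] {
  const hits: TagHit[] = [];
  for (const [file, content] of Object.entries(files)) {
    content.split(/\r?\n/).forEach((text, index) => {
      if (text.includes(SEED_TAG)) {
        hits.push({ file, line: index + 1, text: text.trim() });
      }
    });
  }
  return hits;
}
```

Wired into a pre-launch script, a non-empty result would fail the build, so placeholder data cannot ship by accident.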